Many thanks to SWLing Post contributor, 13dka, who shares the following guest post:

Revisiting the Belka’s “pseudo-sync detector”: A sync detector crash course!

by 13dka

“It’s usually hard to assess whether or not a sync detector helped with a particular dip in the signal or not, unless you have 2 samples of the same radio to record their output simultaneously and compare.”*

That’s what I wrote about the “pseudo sync detector” in my review of the Belka DSP last year.

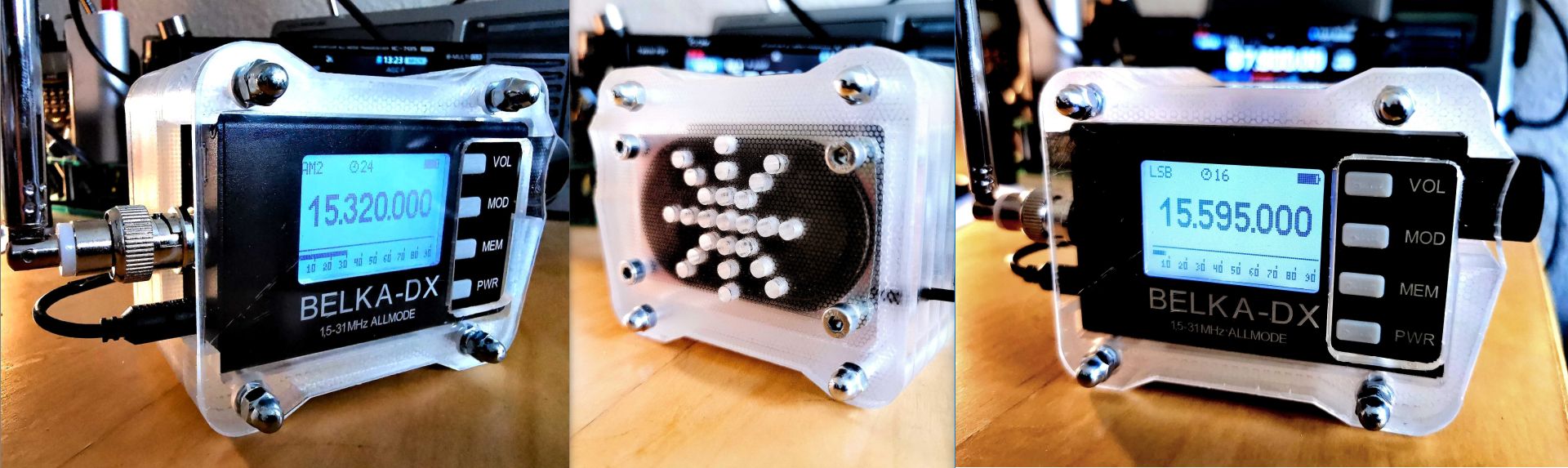

Since I was recently upgrading to the Belka DX in order to pass on the Belka DSP to a friend, I had briefly two examples of almost the same radio on the table at the dike. I tuned them to the same stations and recorded some audio clips with one radio on sync detector, the other in regular AM mode, to answer the question whether or not sync has “helped with a particular dip in the signal”. Then I thought that demonstration would be an opportunity to try an explanation on what exactly (I think) sync detectors are all about anyway, hoping to find a middle ground between “technical” and “dumbed down beyond recognition”.

The trouble with sync detectors

Perhaps no component of a shortwave receiver is surrounded by so much misconception and confusion as sync detectors. Full disclosure: Until quite recently, I had an, at best, vague concept on what they do myself. It seems it’s not so much that people don’t know how they work, what they actually do when they work is where the ideas often diverge. Contributing factors might be that explanations on what problem sync detectors are supposed to solve are usually high-level theoretical and just as hard to digest as the explanations on how they solve that problem, and a few old magazine reviews and now internet buzz probably did their own to misguide or inflate expectations instead of explaining when to turn them on.

The outcome is that sync detectors tend to disappoint: I have often read things like “I turned it on but I couldn’t hear a difference” because people turn them on when there’s nothing for the circuit to do and then they think they do nothing, or they believe their purpose is “magically recovering weak signals” and get disappointed by the mixed results. Contrary to what some YT videos state, sync detectors are not “great when a signal fades a lot” (that’s what the automatic gain control is meant to keep in check) and no, they were not “invented by Sony”.

A very brief history of sync detectors

From the very beginning of radio broadcasting, people noticed that their favorite non-local stations were usually sounding just fine during the day (if they came in at all) and in the early evening, but not so great anymore when the family gathered around the radio on long, dark winter evenings or at night (on the soon to become popular shortwave even during the day). It didn’t take long until the sheer wonder of wireless entertainment wore off enough that listeners and engineers started asking why the tone of these stations was changing continuously and even worse, why some of them exhibited not only fading but some recurring and sometimes very nasty distortion. That’s why the first concepts on addressing these issues by somehow “exalting the carrier” started to emerge as early as 1922, and it took 30-50 more years until these early ideas evolved into the sync detectors we know today.

Not only in this historical context it seems obvious that dealing with the well-known old problem outlined above is (or was) basically all they were supposed to do. But by the time the theories could materialize in practical and affordable circuits, FM and TV were all the rage and the downmarket didn’t care that much for AM broadcasting anymore. Apart from a few professional receivers and ham radio projects, sync detectors didn’t catch on in the consumer radio world. Until the 1970s, when they started to be marketed to demanding shortwave listeners – in usually quite technical or vague and hence confusing ways, and somehow the focus started shifting:

Because they occasionally do recover DX stations buried in the mud to some very varying extent and under some pretty elusive circumstances, this (dare I say) byproduct of the concepts understandably became a sales pitch and often also the one benchmark used to rate sync detectors in reviews, also understandably because rating the elusive thing they were meant to do is difficult.

A “selective fading” crash course

Most of us have learned that “multipath propagation” is the source of the trouble, because it causes…

Selective fading

The difference between fading and “selective fading” is that the latter affects only portions of the signal within the part of the spectrum a station occupies, hence the name. The somewhat unlucky term may have contributed to the confusion too, because (frequency-) selective fading and fading are two completely independent phenomena that can happen both separately and simultaneously! Still, a lot of explanations on the net and even very technical articles often lump them together… Anyway, still very abstract, right?

Why not just have a radio showing us what selective fading is? Here is a “waterfall” plot of a signal, showing the history of a station’s (HF-) spectrum over a span of several seconds, the station is subject to selective fading (and in the middle of the clip also regular fading):

For comparison, here is a waterfall plot of the same kind of signal that is not harmed by selective fading, but exhibits regular fading:

The slanted blue streaks wandering through the modulation spectrum in the first video are simply notches in the signal level, or if you will, they are narrow slices of the spectrum “fading” while the neighboring parts remain unharmed. These notches come into existence because portions of the waves take different paths (hence “multipath propagation”) and travel a different distance than others, so they all hit your antenna at very slightly different times. When all these signal portions sum up in your radio they cause “phase cancellations”, resulting in the notches and hence colorations and distortions.
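For readers who like to see the arithmetic behind those notches, here is a minimal Python sketch of the simplest possible case: one direct wave plus a single delayed echo. The 1 ms delay and the echo strength of 0.9 are made-up values for illustration only, not measurements, and real ionospheric paths are certainly messier than this two-path model.

```python
import cmath
import math


def two_path_gain_db(freq_hz, delay_s, echo_amplitude=0.9):
    """Level in dB at freq_hz when a direct wave and one delayed echo add up."""
    h = 1 + echo_amplitude * cmath.exp(-2j * math.pi * freq_hz * delay_s)
    return 20 * math.log10(abs(h))


def notch_frequencies(f_low_hz, f_high_hz, delay_s):
    """Frequencies in [f_low_hz, f_high_hz] where the echo arrives in antiphase."""
    spacing = 1.0 / delay_s              # notches repeat every 1/delay Hz
    k = math.ceil(f_low_hz * delay_s - 0.5)
    notches = []
    while (k + 0.5) * spacing <= f_high_hz:
        notches.append((k + 0.5) * spacing)
        k += 1
    return notches


if __name__ == "__main__":
    delay = 0.001                        # assumed path delay difference: 1 ms
    for f in notch_frequencies(0, 3000, delay):
        print(round(f), "Hz:", round(two_path_gain_db(f, delay), 1), "dB")
```

The notch spacing in this model is simply 1/delay, so a longer detour packs more notches into a channel, and any small change of the delay makes the whole comb of notches slide through the spectrum.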

Bonus fact: Due to the ionosphere moving at its own pace and changing its shape and altitude (among a lot more variables) all the time, and the planet underneath stubbornly insisting on doing the same thing every day, all non-groundwave paths are also subject to a minuscule Doppler shift, that’s why these notches are not static but keep moving through the spectrum of your station’s channel in all kinds of speeds, directions and amounts.

I used the (“STANAG”) radioteletype signals because they have a very homogenous spectrum that makes the effect very obvious (this can also be seen very well on OTH radar or DRM stations). Now that you know what to look for, you will likely recognize the pattern on this AM broadcast station:

Please note how the signal is not subject to much regular fading but suffers a lot from selective fading, you can also faintly hear how it distorts when the notches pass the carrier peak in the middle of the spectrum. What, you’re still here? 🙂 Then let’s see in some more detail how “selective fading” affects signal quality:

1. “Phase distortion”

The notches wandering through the sidebands (modulation part) of the spectrum cause the usually slowly undulating, “phasing” sound, as if someone is constantly playing with the tone controls… you probably know what I’m talking about, it’s the very trademark “shortwave sound” some of us actually love. It’s not a very destructive effect yet, electric guitar players use effect pedals called “phaser” to recreate a very similar sound in a somewhat similar way and indeed, this kind of “distortion” is becoming most obvious when listening to music. But since it’s altering the tonal quality of the original signal it’s technically considered a distortion.

A sync detector can’t help much with that specific sound. Why I’m even mentioning it: It’s the very same mechanism that periodically wreaks havoc on AM modulation:

2. Carrier attenuation

The notches will sooner or later pass the carrier signal peak in the middle of the AM station’s spectrum and attenuate the carrier to some degree. When that happens, depending on the depth or intensity of these notches, the signal may sound “overmodulated” in shades between “a little scratchy” and “just plain nasty”. “Overmodulation” is what’s actually happening: Attenuating the carrier has the same effect as increasing the modulation level. Since it’s rather the ratio between carrier and modulation than overall signal strength causing this nasty effect, it can render even strong stations pretty unlistenable.
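To put a number on “attenuating the carrier has the same effect as increasing the modulation level”, here is a small back-of-the-envelope sketch. It assumes an idealized case where a notch hits only the carrier and leaves the sidebands untouched; the 50% modulation depth and the notch depths are arbitrary example values.

```python
def effective_modulation_depth(depth, carrier_attenuation_db):
    """Modulation depth seen by the detector after the carrier alone is attenuated.

    depth: original modulation depth (1.0 means 100%).
    carrier_attenuation_db: how far the notch pushes the carrier down, in dB.
    """
    carrier_gain = 10 ** (-carrier_attenuation_db / 20)  # dB to voltage ratio
    return depth / carrier_gain


if __name__ == "__main__":
    for notch_db in (0, 3, 6, 10, 20):
        m = effective_modulation_depth(0.5, notch_db)
        print(notch_db, "dB notch -> effective depth", round(m * 100), "%")
```

Anything above 100% is what an ordinary envelope detector can no longer follow, which is why even a strong station can sound nasty while the notch sits on its carrier.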

This is where the ideas of “exalting” and even replacing the carrier come from, and that’s also the source of aforementioned misunderstanding: The “replacement carrier” is not static, it needs to follow the modulation level, therefore it can’t do anything about regular fading.

What it sounds like and how sync detectors help with that

Here’s a clip with a musical intro (that’s Radio Thailand on 9920 kHz). I duplicated the first few notes of the music and play them twice in a row, first the recording in AM mode and then with the Belka’s sync detector. Note how prickly the piano sounds in the first example and how the distortion disappears in the “sync” part of the clip (0:04s):

This is also very noticeable on speech: Here’s another clip from the same program, note the sound of the word “architecture” and the scratchy sounding sibilants on the following words, then the music part with the last hit of the snare drum (0:06s) sounding like the drummer threw his sticks into a china store. The sync detector is smoothing that all out (0:08s). Isn’t that awesome?

I think this is pretty much the one simple thing that sync detectors were meant to do and what likely most sync detectors are doing just fine: They subtly remove this specific kind of distortion, ideally without adding their own colorations or artifacts. So when you hear some station with some sweet music that turns into heavy metal in intervals, that’s when you definitely want to turn these things on.
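If you would rather see that effect in numbers than in audio, the following Python sketch compares a plain envelope detector with an idealized synchronous (product) detector on a simulated test tone. Everything here is an assumption made for illustration: the signal is modeled at complex baseband, the carrier is knocked down to 20% of its normal level, and the sync detector is simply handed the correct carrier phase, which a real circuit would first have to find on its own.

```python
import cmath
import math


def am_baseband(n, tone_cycles, depth, carrier_gain, phase=0.7):
    """Complex baseband AM: a test tone on a carrier scaled by carrier_gain."""
    rotation = cmath.exp(1j * phase)  # arbitrary carrier phase at the receiver
    return [rotation * (carrier_gain
                        + depth * math.cos(2 * math.pi * tone_cycles * i / n))
            for i in range(n)]


def envelope_detect(iq):
    """Ordinary AM detection: follow the magnitude of the signal."""
    return [abs(s) for s in iq]


def sync_detect(iq, carrier_phase):
    """Product detection against a unit-amplitude reference at the carrier phase."""
    reference = cmath.exp(-1j * carrier_phase)
    return [(s * reference).real for s in iq]


def distortion_ratio(audio, tone_cycles):
    """RMS of everything that is not the test tone, relative to the whole audio."""
    n = len(audio)
    mean = sum(audio) / n
    ac = [a - mean for a in audio]    # drop the DC part like a coupling capacitor
    cos_ref = [math.cos(2 * math.pi * tone_cycles * i / n) for i in range(n)]
    sin_ref = [math.sin(2 * math.pi * tone_cycles * i / n) for i in range(n)]
    a_cos = 2.0 / n * sum(x * c for x, c in zip(ac, cos_ref))
    a_sin = 2.0 / n * sum(x * s for x, s in zip(ac, sin_ref))
    residual = [x - a_cos * c - a_sin * s
                for x, c, s in zip(ac, cos_ref, sin_ref)]
    return math.sqrt(sum(r * r for r in residual) / sum(x * x for x in ac))


if __name__ == "__main__":
    notched = am_baseband(1000, 5, depth=0.5, carrier_gain=0.2)
    print("envelope:", distortion_ratio(envelope_detect(notched), 5))
    print("sync:    ", distortion_ratio(sync_detect(notched, 0.7), 5))
```

The envelope detector folds the negative half of the overmodulated waveform back up, which is where the scratchy sound comes from, while the product detector does not care how small the received carrier is as long as its reference stays in step.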

Weak signal recovery

While the described improvement can be achieved very consistently on signals with fair to strong levels, recovering weak stations or even “extreme DX” is where much of the “sometimes it works, sometimes it doesn’t” talk and the disappointment seems to come from. Any sync detector reaches a point where it stops working of course, where that point is depends on a number of factors:

– The type and performance of the sync detector. Duh! Even sync detectors of the same type can perform very differently due to their individual implementation. The now most common type of sync detector is the “selectable sideband”-style one, as found on the ICF-2001D/2010 or the NRD-525/535, and now e.g. any Tecsun radio with sync. The Sony’s sync detector performance is said to be “legendary”, the implementation confusingly called “ECSS” on the JRC radios ended up, let’s say “controversial” (that likely has to do with their price tags) and the results of the various Tecsun implementations are genuinely all over the place. But even the Sony’s legendary sync mode failed almost as often as it succeeded on weak signals in my personal recollection, and I’m only now beginning to understand why.

– Dynamic range and sensitivity. How “weak” a signal is depends to one part on the sensitivity and noise figure of the radio and a sync detector relies on what the radio in front of it can deliver. Food for thought: The aforementioned Sony ICF-2010/2001D was not only known to have a great sync detector, it also had a reputation of being particularly sensitive and quiet for the standards of the time. The next point is a very related matter:

– Whether or not additional interference is deteriorating reception. Besides the now ubiquitous wideband noise simply reducing practical sensitivity, this could be co-channel interference (another station or deliberate jamming) or local narrowband noise. Then the sync detector may try to improve reception of the station and the interference, and make the interference pop out more than the station. Here’s a pretty fatal example – this was captured with the sync detector of the PL-660, but the effect is not specific to that radio:

Speaking of which, I would’ve loved to demonstrate the virtues of “true” and the difference to “pseudo” sync detectors. Unfortunately I only have an old (2015) PL-660 and opposing the two radios simply did not work at all, which mostly has to do with the 2 points described above, the radios are just too different in performance:

– How much the weak station is actually suffering selective fading. First off, the same is true for strong stations: If there is no selective fading, the sync detector will (ideally) do nothing. When signals are just weak, noisy and subject to regular fading, a sync detector can’t help with that per se. Case in point: This is a medium weak signal from VOA on the 19m-band being mostly affected by regular fading and very little by selective fading. The sync detector just removes the residue bit of distortion and it’s pretty much impossible to tell the difference:

A better way of telling the difference is a stereo file, the radio with regular AM on the left and the sync one on the right channel – the signal stays mono in the middle for the most part, the differences will stick out to the sides:

An even better way is the “null test” – summing both channels in mono and flipping polarity on one – which is “nulling” (cancelling out) all common components of the signal and leaving only the difference:

Obviously, showing that something does nothing can be more complicated than demonstrating the opposite! For comparison, here’s a strong station (Radio Korea in German) with some moderate selective fading, as a stereo file and then “nulled out”. The less difference between the radio’s demodulation product, the quieter the difference will be. Obviously, the sync detector has something to do here:
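For anyone who wants to repeat the null test with their own recordings, the idea can be sketched in a few lines of Python. This is a simplified illustration, assuming both recordings are already loaded as lists of samples and are time-aligned and level-matched; the function names are my own and not part of any audio tool.

```python
import math


def rms(samples):
    """Root-mean-square level of a list of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def null_test_db(left, right):
    """Level of (left - right) relative to left, in dB. Lower means more alike."""
    reference = rms(left)
    if reference == 0:
        raise ValueError("left channel is silent, nothing to compare against")
    difference = rms([l - r for l, r in zip(left, right)])  # flip one, then sum
    if difference == 0:
        return float("-inf")             # perfect null: the channels are identical
    return 20 * math.log10(difference / reference)


if __name__ == "__main__":
    n = 1000
    am = [math.sin(2 * math.pi * 5 * i / n) for i in range(n)]
    # Pretend the second radio adds a little extra content of its own.
    other = [a + 0.1 * math.sin(2 * math.pi * 15 * i / n) for i, a in enumerate(am)]
    print("residual:", round(null_test_db(am, other), 1), "dB")
```

With two real radios the null will never be perfect, because their filters, gain control and noise differ slightly, so the interesting part is how the residual changes between a station with and one without selective fading.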

Can sync detectors recover weak signals at all?

First off, all sync detectors can improve the signal-to-noise ratio a little, the “selectable sideband” variety often changes the filter and less bandwidth means better SNR, on top of that they let you select the sideband that’s least affected by noise or adjacent channel splatter, so the combined efforts of filtering and sideband selection can already improve weak signal reception. Removing selective fading distortion on top of that can make that a “magic button” indeed! Except…since everything changes constantly on shortwave, it can turn into the opposite seconds or minutes later, when the station decides to deliver less than the minimum required signal to keep the sync detector locked onto the carrier. In theory, the “true” sync detector doesn’t even need a carrier but so far I haven’t met an actual implementation that is capable of riding out a “carrierless” period enough to not wander off frequency. So moderately weak signals, actually affected by selective fading can reliably benefit from a sync detector. Absolute grassroots signals: not so much.

Back to the Belka: The Belka’s sync detector is different in that it uses both sidebands like (AFAIK) the circuits in e.g. the AOR 7030 or the IC-R75, and it doesn’t use a phase locking loop (PLL) to sync an internal carrier. Instead, it filters the original carrier and sends it through a limiting amplifier to stabilize it, in the tradition of venerable radios made by Harris, Racal and Drake (R-7). The British rather called it “quasi-” than “pseudo-“-synchronous detector and by the way, as I just found out – it’s also what the NRD-525/535 receivers do (permanently!) when they are in AM mode. While this quasi-synchronous detector doesn’t let you select sidebands, it does help a little with weak signals too, if all factors fall into the right place:

This is a weak signal from “Radio ZP30” (HCJB) on 3995 kHz. On the regular AM example playing first you may notice that the station is dipping into the noise for a second, the “sync” example has only a very brief dropout and the signal appears a little less noisy and more solid in general:

Here’s what it sounds like when it fails as much as it helps – and that’s exactly the point I’m trying to make – on the same station, a few minutes earlier or later. When the carrier drops below the noise, nothing can be recovered, the Belka goes silent where a “true” sync detector would lose lock and howl, also some interference messes with the signal:
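The quasi-synchronous principle described above (filter out the carrier, limit it, use it as the reference) can be sketched in software roughly as follows. To be clear, this is not the Belka’s firmware, which I have not seen. It is a toy model at complex baseband, and the one-pole filter, the `smoothing` value and the muting rule are all assumptions chosen for illustration.

```python
import cmath
import math


def quasi_sync_detect(iq, smoothing=0.01):
    """Toy quasi-synchronous detector on complex baseband samples.

    A narrow one-pole low-pass isolates the carrier, a 'limiter' strips its
    amplitude, and the cleaned-up carrier serves as the demodulation reference.
    """
    if not iq:
        return []
    carrier = iq[0]
    audio = []
    for sample in iq:
        carrier += smoothing * (sample - carrier)   # narrow filter around the carrier
        magnitude = abs(carrier)
        if magnitude == 0:
            audio.append(0.0)                       # no carrier at all: mute
            continue
        reference = carrier / magnitude             # limiter: keep phase, drop level
        audio.append((sample * reference.conjugate()).real)
    return audio


if __name__ == "__main__":
    n, phase = 2000, 0.7
    # Overmodulated test signal: carrier at 0.2, tone at 0.5, unknown phase.
    iq = [cmath.exp(1j * phase) * (0.2 + 0.5 * math.cos(2 * math.pi * 50 * i / n))
          for i in range(n)]
    audio = quasi_sync_detect(iq)
    print(min(audio), max(audio))
```

Because the reference is derived from the received carrier itself, there is no loop that can lose lock and howl, but there is also nothing to fall back on once the carrier sinks into the noise, which matches the behaviour in the clip above.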

Intelligibility

Here’s an example how a sync detector can salvage intelligibility on a weak station (here: Woofferton on 17700 kHz in a closing band). The first part of the clip sounded to me like “..and the next poll? Paul? pole? cold?, versions 29 and 34…” and I was a bit surprised to hear what was actually being said in the second part (0:04s):

If you have interference on one sideband, you can still use “zero-beating” in SSB (aka “ECSS”) with any SSB-capable radio. On the Belka this is particularly easy: If the station is well-maintained no fine-tuning is necessary, due to the very precise and stable (<0.5ppm!) oscillator (yes, it boggles the mind again but it actually has a TCXO inside). The Belkas come well-calibrated and generally spot-on zero over the entire coverage range to begin with, and the calibration on my 1-year old -DSP and the new -DX model on my table was approximately 1/4 of one Hertz apart! So on a radio like this, it’s most times “switch to LSB/USB” and that’s it. The technical difference to a sideband-selecting “true” sync detector is that the latter is zero-beating the station automatically and very precisely, but that automatic is usually also its Achilles heel. ECSS doesn’t have this problem, the signal actually doesn’t need a carrier and the result is the same. Here’s a rare example where the distortion periods are so long that switching forth and back between AM and SSB in the middle of it reveals the effect very clearly (Absolute Radio 1215 kHz on the Icom IC-705):
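To get a feeling for what a “<0.5ppm” oscillator means for zero-beating, here is a quick worst-case calculation. It only covers the receiver side and assumes the full 0.5 ppm error, which the calibration figures above suggest is pessimistic; the example frequencies are simply stations mentioned in this article.

```python
def max_offset_hz(frequency_hz, tolerance_ppm):
    """Worst-case tuning error in Hz for an oscillator tolerance given in ppm."""
    return frequency_hz * tolerance_ppm * 1e-6


if __name__ == "__main__":
    for khz in (1215, 3995, 9920, 17700):
        offset = max_offset_hz(khz * 1000, 0.5)
        print(khz, "kHz: at most", round(offset, 2), "Hz off")
```

Even at the top of the shortwave range the worst case stays within a few Hertz, so any remaining offset depends mostly on how accurately the station itself is on frequency.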

Summary:

Sync detectors address mostly one very specific problem with AM reception: The ugly audio distortion caused by selective fading reducing the carrier to the equivalent of an overmodulated signal.

Their real value is in keeping programs enjoyable for the program listener down to fairly low signals, and very few sync detectors fail at doing this. Recovering truly weak stations is a bonus with a high variance in the results, which is only partially owed to the quality or sophistication of the sync detector circuits. It also depends on external factors and it’s generally limited to mitigating the detrimental effects of selective fading for the most part, skipping all the little side benefits like “removing phase noise from the whatnot thingamajig…yada” here. With regard to the casual broadcast listener, this is probably all there really is to the “magic button”.

Final thoughts about the Belka’s “pseudo sync detector”

After recording these clips and doing more necessary research on sync detectors I found that the little Belka has tricked me once again into underrating its inner qualities. The “pseudo”-prefix in its name may do its own to cause doubts about its effectiveness – quite unjustly! It just silently and very effectively keeps the distortion away and doesn’t change the sound much otherwise, where other sync detectors make clear that they’re on by changing bandwidth/filter shape or volume, turning a light on or malfunctioning right away.

I think the “quasi”- or “pseudo-synchronous” principle it is based on is not as inferior compared to “true” sync detectors as the theory suggests: Where PLL-sync detectors would start howling while trying to re-sync, the Belka mutes the audio briefly and due to its outstanding sensitivity, the sync detector likely stops working at a point further down on the signal meter scale than many other radios would, particularly in this price range.

The sync detector doesn’t have selectable sidebands, but the Belka has all the precision needed for – more often than not – hassle-free zero-beating in SSB without the need to beat zeroes at all, so that’s just as good to me. Overall it’s doing these jobs with the deceivingly big portion of understatement that’s so typical for this tiny communications receiver.

What an absolutely fantastic article! I now understand a lot more about how sync-detection/ECSS works, which is great as my Belka DX is in transit to me!

Thanks again 13DKA!!

Brian Mi7khz

Thank you! Happy I could help, and I hope you enjoy your Belka! 🙂

Thanks so much for pointing out these things with examples!

As a HAM radio man, owning true SSB communications transceivers, I think that it is just the same thing as switching the receiver to SSB and zero-beating the station carrier. These synchronous detectors just try to do the same thing a bit more conveniently for the users, some of whom are not used to SSB at all. But they are doing so by losing the ability to sync on weak signals and this is so important!

Well, of course zero-beating every AM station as you tune the dial is not very convenient (listening to all these carriers sweeping) but you can always use AM, scan the band and then switch to SSB to hear the station of interest. With modern transceivers that are DDS-based you should be fine, as both the transceiver and the station are dead stable in frequency. Modern transceivers also have the ability to vary the bandwidth on SSB up to several kHz, so an AM station’s high audio frequencies are not lost.

Hello. Great article on sync detectors. If you would like a very good example of sync performance for under 100 dollars, buy a Tecsun 680 and you will be glad you did. I have the Sony 2010 and the Grundig 800 Millennium, and the Tecsun works just as well if not better. Once again, thanks for a great article. Ron Z.

The biggest problem with sync detection is the way they were marketed and sold. Many listeners came to expect “magic bullets” from them, when in reality, they are simple tools. I have two radios with it: the Sony ICF-2010 and the Grundig Satellit 800 Millennium. I have never found the 2010 to be what some today call the “Jesus Radio”. It’s a good radio, not a great one. It’s a portable. Occasionally, sync detect has helped pull out stations from the mud, as it did for me once when I pulled in WSM from Nashville, here in Massachusetts. I have used it on the Satellit 800 for slightly better fidelity. But the wrong way to use this feature is, for example, how one YouTube presenter used it, leaving sync detect always on. That is just wrong, and misleading for many starting out in the hobby.

Hi Arthur,

“The biggest problem with sync detection is the way they were marketed and sold.”

That’s exactly what I had in mind when I started writing this article, and a corresponding statement survived edits for quite a while. But I like to check my facts and so I sifted through old brochures and magazine ads from Sony, Grundig, Drake, JRC…. and they were all short and sober, technical or just vague – not a trace of hype.

So how did I (and obviously I’m not alone!) get this impression? I was an avid reader of domestic radio magazines in the 80s and 90s and I’m pretty sure that some reviews in them may have initiated the hype, likely some shop ads, too, but in the end it probably got its momentum only in the minds of the customers. 🙂

I used to think Sync was an absolute must because portable receivers had no other choice to make greatly fading shortwave AM signals sound better. I have been playing around more with my AirSpy HF+ and SDR#, which lets you use LSB or USB up to 12k bandwidth. Been enjoying the stronger stations playing music quite noticeably as a much more pleasant experience! Who needs Sync (which some have stated just makes the background more noisy)?

I am still waiting for a portable radio that can do this on SSB with wide bandwidth and very accurate tuning down to the Hz so I can fine tune it myself – without having to lug around a computer to use it. I will not buy another portable until this happens. The bandwidth on the Belka is too narrow on SSB, so for me, not worth getting yet. You also cannot record to a micro-USB memory card yet either.

Hello, would you recommend buying this receiver for listening to broadcast stations and digital modes, plus A1A/CW?

Best 73, Lionel

Hi, not sure I (well, Google :)) understood you correctly but you can buy the Belka here:

https://belrig.by/

Thank you, have a good week.

Good evening Lionel,

I bought this receiver 2 years ago. To be honest, it can’t be called a DX machine, but it works well, especially for SOTA. Connected to a PC it lets you listen to digital modes. It is a little deaf, so it is preferable to connect it to amplified antennas.

Kind regards,

Patrizio

And then, at the end of the day, it’s still all about antenna and grounding systems, isn’t it, Patrizio 😉 ?

Yes Andrew, you are right, but really the BELKA receiver is optimized for a whip antenna. Maybe it can be used with any type of antenna, also a passive one, with an amplifier connected after the 9:1 balun… this is my next test.

Hello Patrizio,

Thanks for this info on the Belka RX. I do a lot of hiking, so I’m quite limited in what I can carry, and I will therefore buy this RX. I have a 15″ laptop and an amplified antenna.

Patrizio, thank you, good DX, good listening and have a good week.

73s from F11CKA/SWL. Lionel

I really like the sync detection system in SDR#. You can select the sideband or use both sidebands if there is no interference present. The DSB mode gives you some diversity from the phasing sound of many shortwave AM signals.

There is another method for performing a synch detection system: You recover the carrier using a Costas Loop and then use the In-Phase side for AM DSB detection. The advantage here is that even if there never was a carrier present, the loop will lock on to the sidebands and properly center the carrier or clock.

I did some experiments in the late 1990s with a Quasi sync detector (using a limiting amplifier from an NE604 chip to recover the carrier) and a full synchronous detector (using an NE602 chip as a phase detector to reinject a carrier). The full synchronous detector sounded a lot better. Selective fading was a problem but with sufficient capacitance on the synch loop and reasonable tuning toward the center of the signal, it held sync pretty well through fades. We applied it to an FRG100 receiver. At least on local signals it sounded fantastic. For Shortwave signals, it was sort of iffy. It needed tweaking and we lost interest in trying to tweak it any further.

Yeah the (assumedly) Costas loop variation used for co-channel cancellation and the sync in SDR# is really impressive! I don’t know how Alex implemented the sync detector in the Belka. Since it’s an SDR it just mimics an equivalent of a quasi-sync circuit out of I and Q, so it likely doesn’t suffer any hardware-related problems. 🙂

“The DSB mode gives you some diversity from the phasing sound of many shortwave AM signals. ”

I thought about elaborating on that for a while before writing the article but I wasn’t quite sure if this is true: I think it pretty much boils down to the necessity to *see* the spectrum and how the selective fading hits it before making a decision for or against both sidebands. If you have a wide swath of phase cancellations going from one sideband to the other, DSB can (in theory) even that out a little because you mix an “intact” sideband with the affected one. If you have an image like in the examples in the article above, DSB can make things worse and you could cut the number of phase cancellations in half by selecting one sideband only. But I guess on balance both probably make too little of a difference to even bother.

As I understand it, there are basically two different ways to implement sync detection. One is to amplify the heck out of whatever carrier can be detected and reinsert that into the signal. This requires a carrier to be present, but not necessarily both sidebands. The other way is to apply math magic to regenerate the carrier from the two sidebands. A carrier need not be present in this case, but both sidebands need to be there.

Sometimes I like to use the selectable sideband synchronous detector in SDRuno to lock onto one carrier where there is a competing carrier from an off-frequency signal, but if the signals are weak, turning on the sync detector just makes things noisier.

Craig Siegenthaler at Kiwa Electronics is mainly known today for his excellent I.F. filters, and MW and Shortwave Loop Antennas, but he put a lot of effort into designing an add-on sync detector called the Kiwa MAP Unit that he sold for a relatively short period in the 1990s. This unit requires a 455 kHz input picked up from a tap on a receiver with a 455 kHz IF, then outputs sound from its own speaker and amplifier, and includes Wide/Narrow filter selection. The Narrow filter is 3.5 kHz and its skirts seem really sharp. The MAP unit does not select a sideband, and never seems to lose “lock” even if you tune off center. It is built into a really high quality metal cabinet, nearly “tank like.” Mine has never had an internal circuit problem, and I have had it since 1997.

I wish Craig would write a description of how his version of Sync Detection for AM works.

My fuzzy memory is that the first commercial sync detectors sold were part of early TV sets as part of raster scan.

Thank you for your comment! There’s also the Sherwood SE-3 (still being sold apparently but holy..$&/§) 🙂

Indeed, sync detectors did catch on in the TV sector earlier and that propelled the development of ICs for that, which in turn helped getting them into a few more radios before the demand picked up once again for AM stereo in the 80s.

I’ve always enjoyed the descriptions of synchronous detection from Craig:

https://www.qsl.net/n9ewo/SYNCHRONOUS_DETECTION.pdf

The reviews of his Kiwa MAP product were also quite instructive of what might go into a world-class AM demodulator. Starting on page 18 (as labeled) or page 20 (as numbered in the PDF):

https://worldradiohistory.com/Archive-DX/NASWA/90s/NASWA-1990-09.pdf

I think both those articles complement this wonderful post and its samples nicely!

Now if we could only get Craig to assist in a SDR/DSP recreation. The original MAP units are getting hard to find and pricey.

If I could have everything, maybe I’d throw in a switchable Costas loop, just cause I’ve never spent time comparing a non-toy implementation. Plus SDR recordings allow for easy re-play/re-demodulation to get the controls just right.

Anyone know Craig? Maybe this would make for a nice SWLing Post community project.

Oh hey, Michael! Thanks for the trip down memory lane with the mention of my Kiwa MAP article in the NASWA Journal publication! I’d totally forgotten I’d written that condensed article for them.

I no longer have a MAP unit, but when it was in development I helped Craig test various prototypes (a few in unusually-shaped, angled-front enclosures). He is a stickler for detail, and in designing the MAP he went through no less than 25 different makes and models of (bare) speakers before deciding which one had the best sound for the production MAPs.

Another fond memory was a MAP test that Craig and I did at the Washington coast during a gathering of DXers in the spring of 1990 (1991?). We were tracking the fade-out of HLAZ 1566 kHz from Cheju, South Korea. This is an FEBC missionary station which is often heard in the Pacific NW. The HLAZ station is typically heard through the evenings with Japanese or Chinese language broadcasts, using antenna beams on the appropriate bearings (e.g., well away from the USA).

We carefully compared the post-sunrise signal of HLAZ with a west-facing Beverage antenna hooked to a JRC NRD-525 receiver. HLAZ was heard for an unusually long time after local sunrise that morning. As the signal faded down with audio *just* at the noise floor on the NRD-525, we switched over to the MAP. It was clearly providing a better signal-to-noise ratio result!

A few minutes later HLAZ’s audio was gone on the NRD-525’s AM detector, but stayed audible on the MAP for 10 minutes longer before disappearing. It took another 45-60 minutes for the signal’s carrier tone in SSB mode to disappear. Again, the carrier lasted somewhat longer through the MAP than it did on the “barefoot” NRD-525.

All of us observing the test that day came away with a high opinion of Craig’s new MAP device!

In the late forties, GE sold the YRS-1, which was a synchronous detector with selectable sideband. I only saw cryptic references, until I saw the GE Ham News SSB Handbook a few years ago. There’s an article in there, complete with schematic. It used about 14 tubes; lots of receivers at the time had fewer.

I have no idea of price, or how little it sold. I just read there was a military version, so that likely sold more.

At the time, GE was interested in double sideband suppressed carrier, and it needed a proper detector. It made transmitters simple. So the concept was around, and GE was a source of other articles. For most hams, DSB was a cheap and simple way to go “sideband”, based on the notion that at the other end, the receiver was for SSB, so the receiver converted the DSB to SSB before detection.

But yes, TV demodulation of the picture carrier could use sync detectors, though I don’t know how common it was. About fifty years ago, Motorola had the MC1330P, an 8-pin IC that would now be called “quasi-synchronous”, i.e. amplify and limit the signal, and in a mixer, mix it with the incoming signal. Later there were PLL-based sync detectors aimed at the TV market.
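For anyone who wants to play with the “limit, then mix” idea, here is a minimal sketch in plain Python. It is not a model of the MC1330P or of any particular chip; the function names, the hard limiter and the one-carrier-period moving average used as a low-pass filter are all assumptions made for illustration.

```python
import math

def moving_average(x, n):
    """Crude low-pass filter: running mean over n samples."""
    acc = sum(x[:n])
    out = [acc / n]
    for i in range(n, len(x)):
        acc += x[i] - x[i - n]
        out.append(acc / n)
    return out

def quasi_sync_demod(samples, carrier_hz, sample_rate):
    """Hypothetical quasi-synchronous detector (illustration only)."""
    # Limiter: throw away the amplitude, keep only the carrier's polarity.
    limited = [1.0 if s >= 0 else -1.0 for s in samples]
    # Mixer: multiply the incoming signal by its own limited copy.
    mixed = [s * l for s, l in zip(samples, limited)]
    # Low-pass: average over one carrier period to remove the RF ripple.
    n = round(sample_rate / carrier_hz)
    return moving_average(mixed, n)

if __name__ == "__main__":
    fs, fc, fm, depth = 200_000, 10_000, 100, 0.5
    am = [(1 + depth * math.cos(2 * math.pi * fm * k / fs))
          * math.cos(2 * math.pi * fc * k / fs) for k in range(4000)]
    audio = quasi_sync_demod(am, fc, fs)
    print(min(audio), max(audio))
```

Note that with an ideal, unfiltered limiter like this one, the product is simply the absolute value of the signal, so the sketch behaves like a full-wave envelope detector. The hard limiter here takes its “carrier” from whatever polarity the input has, noise included, which is one way to picture why such detectors have a threshold to overcome.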

One Racal receiver had a synchronous detector, but I’ve not seen a date of when it came out. Too expensive for most of us. A search last night says there was an Eddystone in England, but again no date.

The concept really needed ICs to be viable.

Sony added sync detection to the ICF-2010 and subsequent receivers because their multi-mode AM Stereo IC performed that function for AM Stereo in the Kahn-Hazeltine mode. They simply were able to adapt existing technology. The Sony implementation is arguably superior to others, even the modern DSP-based ones.

Icom even used the Sony AM Stereo chip in its IC-R75 receiver for its sync detection. The performance of sync on the IC-R75 pales in comparison to Sony receivers, so Icom appears to use different logic for detection. At some point, the supply of these chips disappeared. So Icom revised the design to not include sync. So the older models actually have more features.

13dka,

Very interesting article on an often confusing subject. Thanks for taking the time to explain it so thoroughly.

Tom

While there were construction articles starting in the fifties, sync detectors needed a bunch of tubes, so few were interested. It improved around 1970, ICs making it more practical.

But they weren’t in receivers that average people could buy until the Sony 2010 in the eighties. There was no demand. I have no idea why Sony put a sync detector in that receiver.

But once there, it became something to talk about, something to expect as time went on. And then to dismiss receivers without sync detectors.

I’d argue one reason to include them was for SSB. You don’t want to put a narrow filter in a portable sw receiver, because a primary use is to receive AM. But put a selectable sideband detector in, and you get half the bandwidth. In the fifties, there were “sideband slicers” like this to add to AM receivers, that needed a product detector and better selectivity for SSB. Once you have that, a bit more circuitry, easy with ICs, you’ve got synchronous detection too.

Selectable sideband, more than synchronized detection, is a vast improvement. The nearby station on 1040 dwarfs reception of WBZ on 1030. But if I kick in the sync detector on my Grundig G3, with lower sideband selected, the 1040 station disappears.

A big problem is that “sync detector” can be applied to a lot of different implementations. We often don’t see the circuit. I thought the Drake R7 didn’t have sync, but who knows. The R8 does, I’ve seen the circuit. Quasi-sync used to be sync; either one has a threshold to overcome. Everyone talks about “locking to the carrier”, something very obvious with the Signetics NE561 from fifty years ago. But some/many use the two sidebands to place the reinserted carrier right between, so no carrier needed.

Double sideband, suppressed carrier is a mode, but you need a proper receiver to demodulate it, or most people use a sideband receiver, converting to SSB inside the receiver before demodulation.
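The point above about using the two sidebands to place the reinserted carrier can be illustrated with one textbook trick, squaring the signal. This is only a sketch of that one approach, not a description of how any particular receiver does it: squaring a double-sideband signal creates a spectral line at twice the (missing) carrier frequency, which can be searched for and halved. The function name, the search range and the brute-force correlation are assumptions chosen to keep the example dependency-free.

```python
import math

def estimate_suppressed_carrier(samples, sample_rate, lo_hz, hi_hz, step_hz):
    """Hypothetical helper: estimate a DSB-SC carrier frequency by squaring.

    Candidate carriers from lo_hz to hi_hz (integers, in step_hz steps)
    are tested by correlating the squared signal against twice each one.
    """
    squared = [s * s for s in samples]
    best_f2, best_mag = None, -1.0
    f2 = 2 * lo_hz
    while f2 <= 2 * hi_hz:
        w = 2 * math.pi * f2 / sample_rate
        re = sum(v * math.cos(w * k) for k, v in enumerate(squared))
        im = sum(v * math.sin(w * k) for k, v in enumerate(squared))
        mag = math.hypot(re, im)
        if mag > best_mag:
            best_f2, best_mag = f2, mag
        f2 += 2 * step_hz
    # The strongest line sits at twice the carrier, so halve it.
    return best_f2 / 2

if __name__ == "__main__":
    fs, fc, fm = 200_000, 10_000, 300
    # A 300 Hz tone on a suppressed 10 kHz carrier: only the two
    # sidebands at 9.7 and 10.3 kHz are present, no carrier.
    dsb = [math.cos(2 * math.pi * fm * k / fs)
           * math.cos(2 * math.pi * fc * k / fs) for k in range(4000)]
    print(estimate_suppressed_carrier(dsb, fs, 9_500, 10_500, 25))
```

A real detector would track the phase continuously rather than search a frequency grid, but the sketch shows why no transmitted carrier is needed for this to work.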

Thank you for the comment, Michael!

“I thought the Drake R7 didn’t have sync, but who knows.”

They even gave it a lovely name: “Synchro-Phase”.

(At the bottom of this old R7 brochure)

http://www.wb4hfn.com/DRAKE/DrakeCatalogsBrochures/Brochure_R7_01.htm

Probably the only vintage radio I’d break my strict “don’t become a radio museum” rule for. 🙂